In my final semester at UC Berkeley, I took Cognitive Neuroscience (CogSci 127) with Professor Richard Ivry. A large share of the grade in this class depends on the capstone project, in which you design an in-depth experiment on one of the following subjects:

Learning and Memory (meh)

Consciousness (not bad but we can do better)

Social Cognition (we can still do better)

Development (decent but I need more)

Brain-Machine Interfaces…

I recognized the word ‘interfaces’ from my career path in UX. And I recognized the word ‘brain’ quite well from my major in Cognitive Science. And I even recognized the word ‘machine’ from being one.

But the three combined were new to me, and the combination immediately caught my attention. So I proceeded to do something very smart: I chose to do my capstone project on said subject, which I knew absolutely nothing about.

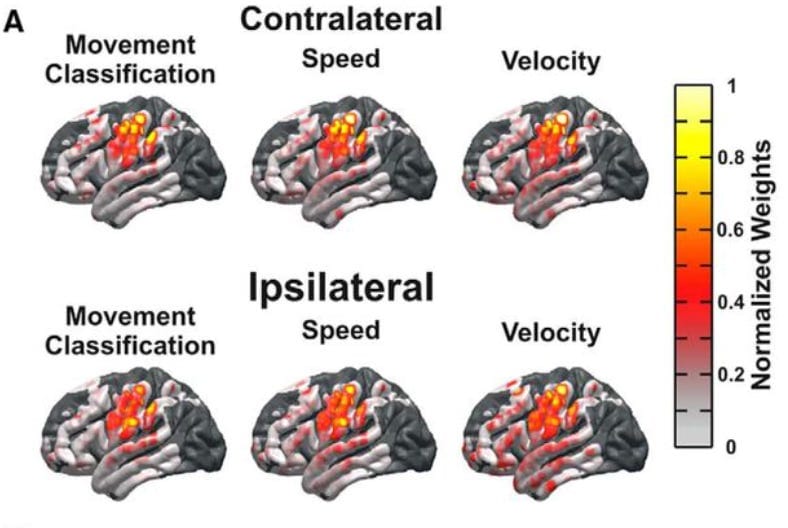

But my ignorance died fast. I began exploring, starting with some of the readings offered on the course website. My first-ever exposure to BMIs came from one of these research articles: “Unilateral, 3D Arm Movement Kinematics Are Encoded in Ipsilateral Human Cortex” (Bundy et al. 2018). That’s a lot of crazy words, but all it means is that the study looked at how the hemisphere on the same side as the moving arm (the ipsilateral hemisphere) represents that arm’s 3D movements, even though we usually think of the opposite hemisphere (the contralateral one) as being in charge of motor control. The researchers found that both hemispheres encode this movement information in similar ways, suggesting that the brain relies on both sides more than previously thought when planning and executing complex movements. Fascinating stuff.

During my exploration, I learned a lot. I learned that brain-machine interfaces, or BMIs, are systems that directly connect the human brain to external devices. They have the potential to revolutionize fields like medicine, allowing paralyzed individuals to control prosthetic limbs with their thoughts or enabling communication for those who can't speak. BMIs could also transform industries beyond healthcare: enhancing virtual and augmented reality, making gaming more immersive by letting players control actions with their minds, improving human-computer interaction in creative fields like music and art production, and even boosting productivity in engineering, design, or data analysis by streamlining complex tasks through direct neural control. Essentially, BMIs are the future.

Then a realization hit me: I didn’t choose my career in UX because I was curious about how humans interact with computers. I chose it because I wanted to know more about how our brains interact with computers. I have always been curious about how things work. Computers and brains are the two most complex and fascinating machines on the planet, so the intersection of the two is exactly where I want to be.

So that is how we got here.

And now I am excited to announce that I’m launching The Neurotech Napkin, a newsletter exploring the ideas, tools, and wild potential of consumer neurotech. Think of it as brainy ideas scribbled on the back of a napkin: quick, curious, and a little chaotic.

If you're curious about where brains and technology collide, and what that means for the future, you should stick around.